To scrape leads with AI, you can use a no-code tool like a Chrome extension to automatically scan a webpage, identify contact profiles, and pull them into a clean, organized list. Instead of manually copying names, job titles, company details, and contact data into spreadsheets, sales teams, recruiters, and marketers can build structured lead lists in minutes and move them into outreach or CRM workflows much faster.

A modern lead scraping workflow is not just about collecting names from a webpage. It is a repeatable system for finding, extracting, organizing, enriching, and activating lead data for sales, recruiting, and marketing.

This article is part of our broader guide to lead scraping. If you want the complete end-to-end workflow—including lead sources, scraping methods, enrichment, data quality, CRM activation, and compliance—see: Lead Scraping Guide: How to Scrape, Enrich, and Convert Leads at Scale.

The Traditional Way vs. the AI Way

The traditional way of gathering leads is slow, repetitive, and prone to mistakes. Manually highlighting a name, copying it, pasting it into a spreadsheet, and repeating that process dozens of times creates unnecessary friction for teams that need to move quickly.

The goal today is speed and accuracy, which means automating the most time-consuming parts of prospecting.

This is where the ability to scrape leads with AI changes the workflow. Instead of treating a webpage as unstructured text, an AI scraper can recognize patterns in names, titles, companies, and contact details, then extract that information into a structured list.
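To make "structured" concrete, here is a deliberately simple, rule-based sketch in Python. It assumes a hypothetical page where each profile appears as a line like `Name, Title at Company`. An AI scraper infers patterns like this from the page itself rather than relying on one hard-coded rule, so treat this only as an illustration of the output shape: one record per person, with named fields.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class Lead:
    name: str
    title: Optional[str] = None
    company: Optional[str] = None


# Hypothetical line format "Name, Title at Company" (illustration only).
LINE_PATTERN = re.compile(
    r"^\s*(?P<name>[^,]+),\s*(?P<title>.+?)\s+at\s+(?P<company>.+?)\s*$"
)


def parse_leads(text: str) -> list[Lead]:
    """Turn free-form lines into Lead records, skipping lines that don't match."""
    leads = []
    for line in text.splitlines():
        match = LINE_PATTERN.match(line)
        if match:
            leads.append(Lead(**match.groupdict()))
    return leads


if __name__ == "__main__":
    page_text = """
    Jane Doe, VP of Marketing at Acme Analytics
    Upcoming events
    Raj Patel, Head of Sales at Northwind
    """
    for lead in parse_leads(page_text):
        print(lead)
```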

Why Manual Lead Collection Slows Teams Down

Building a lead list by hand is not just inefficient; it creates a bottleneck. Every minute spent on data entry is a minute not spent on outreach, research, qualification, or closing deals.

The limitations of the manual approach are easy to see:

- It’s slow: Copying and pasting just 50 profiles from a directory can easily consume a large part of your day.

- It leads to errors: Typos, inconsistent formatting, and missed details can affect data quality from the start.

- It does not scale well: Building a targeted list of 1,000 prospects manually is difficult and inefficient.

A structured workflow also helps solve three common problems at once: poor data quality, a hard ceiling on how many leads a team can process manually, and too much time spent on administrative work instead of outreach.

The Modern, One-Click Workflow

The difference between manual lead collection and AI-assisted extraction is substantial. Here is a simple comparison of the two approaches.

Manual Lead Scraping vs AI-Powered Scraping

| Feature | Manual Scraping | AI-Powered Scraping (with ProfileSpider) |
|---|---|---|
| Speed | Slow for larger lists | Fast extraction from a single page |
| Accuracy | Prone to human error | Structured data recognition reduces manual mistakes |
| Scalability | Difficult to scale | Better suited for larger lead-building workflows |
| Data Format | Often inconsistent and requires cleanup | Clean, structured, and export-ready |
| Effort | High-effort and repetitive | Low-effort, streamlined workflow |
| Focus | On data entry | On qualification, outreach, and strategy |

AI-powered tools like ProfileSpider simplify the process. After installing a browser extension, you can navigate to a list of conference speakers, a company team page, or a professional directory, and extract structured profile data in a few clicks.

For sales teams, this means turning public webpages into structured prospect lists that can be reviewed, enriched, tagged, and exported for outreach. For recruiters, it means moving from manual sourcing to a faster, more repeatable workflow.

These tools do not simply copy visible text. They help identify profile-level information, distinguish between people and companies, and organize the extracted data into a format that is easier to manage. For a deeper dive, check out our guide on the power of an AI scraper.

Step 1: Install Your AI Scraping Tool

Before you can start pulling in leads with AI, you need the right tool. The best modern AI scrapers are designed for speed and ease of use, usually as lightweight browser extensions that can be installed quickly.

We’ll use ProfileSpider as the main example here because it reflects the kind of setup many no-code scraping tools offer for sales teams, recruiters, and marketers.

Your One-Click Scraper Install

Most no-code scraping tools are available as Chrome extensions, which means there is no heavy software installation or technical setup.

The installation process is simple:

- Go to the tool’s page on the Chrome Web Store.

- Click Add to Chrome.

- Approve the requested permissions so the tool can read page content and extract profile data.

Once installed, your browser is ready to scan pages and extract leads from relevant sources.

Here’s a look at the ProfileSpider extension page on the Chrome Web Store.

The clean interface and positive user feedback help communicate that the tool is built for quick onboarding and practical use.

A Quick Look at Your New Dashboard

After installation, the extension icon appears in your browser’s extension bar. The interface is designed to stay simple. In a tool like ProfileSpider, the main action is straightforward: Extract Profiles.

Older scraping workflows often required field mapping or page-specific configurations. AI-powered scrapers reduce that setup work by analyzing the page structure automatically and identifying which elements represent professional profiles.
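ProfileSpider's detection logic is not public, so the sketch below is only a rough stand-in for the idea. It assumes that profile cards on a listing page share the same tag and `class`. It finds elements repeated at least `min_repeats` times (an arbitrary threshold), then keeps the repeated element whose instances hold the most text, since an outer card wraps the name, title, and company together. It uses only Python's standard `html.parser`.

```python
from collections import Counter
from html.parser import HTMLParser

# Void elements never get an end tag, so they must not change nesting depth.
VOID_TAGS = {"area", "br", "col", "hr", "img", "input", "link", "meta", "source", "wbr"}


class ClassCounter(HTMLParser):
    """First pass: count how often each (tag, class) pair appears."""

    def __init__(self) -> None:
        super().__init__()
        self.counts: Counter = Counter()

    def handle_starttag(self, tag, attrs):
        cls = dict(attrs).get("class")
        if cls:
            self.counts[(tag, cls)] += 1


class CardText(HTMLParser):
    """Second pass: collect text fragments inside each element matching `target`."""

    def __init__(self, target: tuple) -> None:
        super().__init__()
        self.target = target
        self.depth = 0  # > 0 while inside a matching card
        self.cards: list[list[str]] = []

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        if self.depth:
            self.depth += 1
        elif (tag, dict(attrs).get("class")) == self.target:
            self.depth = 1
            self.cards.append([])

    def handle_endtag(self, tag):
        if tag not in VOID_TAGS and self.depth:
            self.depth -= 1

    def handle_data(self, data):
        if self.depth and data.strip():
            self.cards[-1].append(data.strip())


def find_profile_cards(html: str, min_repeats: int = 3) -> list[list[str]]:
    """Return the text of the repeated element that holds the most text overall."""
    counter = ClassCounter()
    counter.feed(html)
    counter.close()
    best: list[list[str]] = []
    for key, count in counter.counts.items():
        if count < min_repeats:
            continue
        extractor = CardText(key)
        extractor.feed(html)
        extractor.close()
        # Outer card containers wrap name, title, and company together,
        # so they hold more text than any single inner field.
        if sum(map(len, extractor.cards)) > sum(map(len, best)):
            best = extractor.cards
    return best
```

Real pages break these assumptions often (nested layouts, missing classes, text spread across sibling elements), which is the kind of page-specific setup work AI-based detection aims to remove.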

Key takeaway: Your job is not to configure a complex extractor. Your job is to identify strong lead sources. The tool handles much of the extraction logic so you can focus on targeting and qualification.

Before you begin, it helps to create your first contact list. These lists act like project folders for keeping campaigns organized. A recruiter might create lists like Senior Python Developers Q3 or UX Designers – Bay Area. A sales rep might create SaaS Founders – NYC or Marketing VPs – Prospect List.

Creating a list before extraction keeps new leads organized from the start and makes downstream filtering, tagging, and exporting much easier.

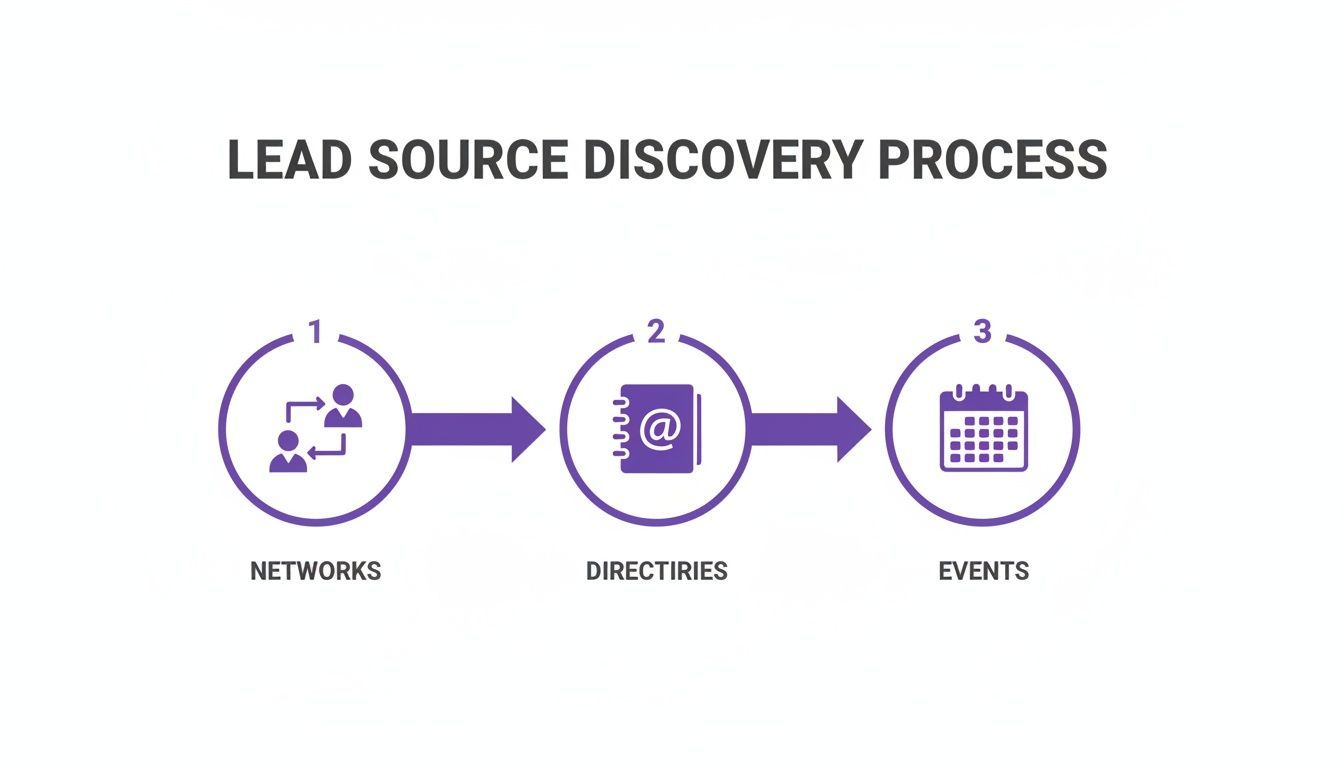
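If you also handle leads in your own scripts, the "lists as project folders" idea can be sketched as a small in-memory store. `ListStore` and its methods are hypothetical names, not ProfileSpider's API; the one rule it enforces is the habit described above, namely that a list must exist before extracted leads are saved into it.

```python
from dataclasses import dataclass, field


@dataclass
class ContactList:
    name: str
    leads: list[dict] = field(default_factory=list)


class ListStore:
    """Keeps leads in named lists, like project folders (hypothetical, in-memory)."""

    def __init__(self) -> None:
        self._lists: dict[str, ContactList] = {}

    def create(self, name: str) -> ContactList:
        # Creating a list that already exists returns it instead of wiping it.
        return self._lists.setdefault(name, ContactList(name))

    def save(self, name: str, leads: list[dict]) -> int:
        """Append extracted leads to an existing list; returns the new list size."""
        if name not in self._lists:
            raise KeyError(f"List {name!r} does not exist; create it before extracting")
        self._lists[name].leads.extend(leads)
        return len(self._lists[name].leads)

    def names(self) -> list[str]:
        return sorted(self._lists)


if __name__ == "__main__":
    store = ListStore()
    store.create("SaaS Founders NYC")
    store.save("SaaS Founders NYC", [{"name": "Jane Doe", "company": "Acme"}])
    print(store.names())
```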

Step 2: Identify High-Value Lead Sources

Once the tool is ready, the next step is deciding where to use it. The quality of your results depends heavily on the quality of your lead sources.

Your success comes from finding the websites where your ideal prospects, candidates, or partners already appear. That is where prospecting shifts from setup into strategy.

Look Beyond the Obvious

LinkedIn is a common starting point, but your best leads are not only there. Many valuable prospects also appear in less crowded places across the public web. These sources often provide stronger context and lower competition than mainstream platforms alone.

Here are several high-value lead sources worth exploring:

- Industry-specific directories: Directories for consultants, agencies, specialists, or certified professionals often contain highly relevant leads.

- Conference speaker and attendee lists: Event websites frequently publish speaker pages and sponsor lists that surface active, engaged professionals.

- Company About Us and team pages: Company websites often list key people with exact titles, making them useful for account-based sales and targeted recruiting.

- Niche online communities and forums: Specialized communities can reveal people actively discussing the exact problems your product or service solves.

- Google Maps results: Local business searches can surface a clean set of companies for region-specific prospecting.

By expanding beyond the most obvious platforms, you can uncover lead sources that are both relevant and underused.

Putting It Into Action: Real-World Scenarios

Here is how different teams might use an AI scraper like ProfileSpider in practice.

Scenario 1: A Recruiter Sourcing Engineers

A recruiter needs to find senior backend engineers with Go experience. Instead of relying only on LinkedIn searches, she looks at a developer conference website and opens the Speakers page.

That page already contains a concentrated list of relevant professionals, including names, companies, and talk titles. She opens the extension, clicks Extract Profiles, and saves the results into a list for review.

She can then tag those leads with labels such as GoCon-Speaker and Senior-Engineer, making future filtering easier.

In a few minutes, she has a focused sourcing list built from a highly relevant public source that would have taken much longer to compile manually.

Scenario 2: An SDR Targeting Marketing Leaders

A sales development rep is selling an analytics platform to marketing leaders at B2B SaaS companies. Instead of relying on a single platform, he searches for public lists of award winners, conference speakers, and company leadership pages.

When he finds a page listing notable marketing leaders and their companies, he uses ProfileSpider to extract the names, job titles, and organizations into a structured list.

Because the data is organized from the start, he can review which contacts align with his ideal customer profile and prepare more relevant outreach.

This is one of the practical advantages of scraping leads with AI: sourcing becomes more contextual, and outreach becomes easier to personalize.

Step 3: Run Your First AI Scraping Campaign

Once your tool is installed and your target sources are identified, you can move from planning to execution. This is where a simple webpage becomes a clean, actionable lead list.

Imagine you are an SDR building a prospect list for a project management tool aimed at decision-makers in fast-growing startups.

From Target Page to Instant List

Your first source is a public directory featuring promising startups and leadership teams. Instead of manually copying details one by one, you navigate to the page, open the ProfileSpider extension, and select the contact list you created earlier.

Then you click Extract Profiles.

The tool scans the page, detects the profile structure, and organizes the extracted data into a list that may include names, roles, companies, and related details.

In many cases, the goal is not just to find one person. Extracting multiple profiles from the same company, event, or directory can help you map an account more effectively by identifying decision-makers, influencers, and other relevant stakeholders.
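Account mapping can be approximated by grouping extracted profiles by company and sorting each person into a stakeholder bucket. The keyword rules in `ROLE_RULES` are hypothetical and deliberately crude; adjust them to the titles that are common in your market.

```python
from collections import defaultdict

# Hypothetical keyword rules, checked in order; the first match wins.
ROLE_RULES = [
    ("decision-maker", {"ceo", "cto", "cfo", "coo", "chief", "vp", "founder", "president"}),
    ("influencer", {"head", "director", "manager", "lead"}),
]


def classify(title: str) -> str:
    """Map a job title to a stakeholder role using simple keyword matching."""
    words = set(title.lower().replace(",", " ").split())
    for role, keywords in ROLE_RULES:
        if words & keywords:
            return role
    return "other"


def map_accounts(profiles: list[dict]) -> dict[str, dict[str, list[str]]]:
    """Group profiles by company, then by stakeholder role."""
    accounts: dict = defaultdict(lambda: defaultdict(list))
    for profile in profiles:
        accounts[profile["company"]][classify(profile["title"])].append(profile["name"])
    # Convert to plain dicts so the result prints and compares cleanly.
    return {company: dict(roles) for company, roles in accounts.items()}
```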

This turns source discovery into a repeatable workflow: find a relevant page, extract the profiles, review the results, and continue building the list.

Refining Your Data for Outreach

Raw extraction is a starting point. The next step is turning that list into something your team can actually use.

Begin by applying useful tags. In the SDR example, strong tags might include:

- Decision-Maker for VP- and C-level contacts

- Q4-Prospect for campaign planning

- Top-100-Startup to track the source

Tagging makes it easier to segment leads later for outreach, assignment, or export.
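Tags are essentially labels you can filter on. This sketch assumes a hypothetical rule for `Decision-Maker` (the title's first word marks a VP- or C-level role) and shows how combining tags yields an outreach segment. It illustrates the idea only; it is not how ProfileSpider stores tags.

```python
from dataclasses import dataclass, field

# Hypothetical rule: titles whose first word marks a VP- or C-level role.
SENIOR_FIRST_WORDS = {"vp", "vice", "chief", "ceo", "cto", "cfo", "coo", "cmo"}


@dataclass
class Lead:
    name: str
    title: str
    tags: set[str] = field(default_factory=set)


def is_decision_maker(lead: Lead) -> bool:
    words = lead.title.lower().split()
    return bool(words) and words[0] in SENIOR_FIRST_WORDS


def tag_where(leads: list[Lead], tag: str, predicate) -> None:
    """Add `tag` to every lead that satisfies `predicate`."""
    for lead in leads:
        if predicate(lead):
            lead.tags.add(tag)


def segment(leads: list[Lead], *required: str) -> list[Lead]:
    """Return leads that carry every required tag."""
    return [lead for lead in leads if set(required) <= lead.tags]
```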

You can also add notes to preserve context. Notes like Met their CEO at SaaSCon or Company recently raised Series B make future outreach more informed and less generic.

Structured extraction also helps with qualification. Once names, titles, companies, and locations are captured in a consistent format, teams can quickly assess whether a lead matches their ideal customer profile before investing more time in research or outreach.
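A quick ICP check can be as simple as a points-based score. Every keyword, weight, and threshold below is a placeholder assumption to replace with your own ICP definition; the point is that consistent fields are what make this kind of filter possible.

```python
from dataclasses import dataclass

# Hypothetical ICP: weights and values are placeholders, not recommendations.
TITLE_KEYWORDS = {"marketing": 2, "growth": 2, "vp": 1, "head": 1, "director": 1}
TARGET_INDUSTRIES = {"b2b saas"}
TARGET_LOCATIONS = {"new york", "remote"}


@dataclass
class Lead:
    name: str
    title: str
    company: str
    location: str
    industry: str


def icp_score(lead: Lead) -> int:
    """Add points for title keywords, a target industry, and a target location."""
    title_words = set(lead.title.lower().split())
    score = sum(weight for kw, weight in TITLE_KEYWORDS.items() if kw in title_words)
    if lead.industry.lower() in TARGET_INDUSTRIES:
        score += 3
    if lead.location.lower() in TARGET_LOCATIONS:
        score += 1
    return score


def qualify(leads: list[Lead], threshold: int = 4) -> list[Lead]:
    """Keep leads at or above `threshold`, best matches first."""
    return sorted(
        (lead for lead in leads if icp_score(lead) >= threshold),
        key=icp_score,
        reverse=True,
    )
```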

Pro tip: Run a duplicate check as you build lists from multiple sources. Merging duplicates early keeps your dataset cleaner and reduces the risk of repeated outreach.
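A duplicate check needs a matching key. This sketch assumes that name plus company, normalized for case and spacing, is a good enough key (an email address is a stronger key when you have one). It keeps the first record and lets later duplicates fill only fields that are still empty.

```python
import re


def normalize(value: str) -> str:
    """Lowercase, trim, and collapse internal whitespace so near-identical strings match."""
    return re.sub(r"\s+", " ", value or "").strip().lower()


def dedupe(leads: list[dict]) -> list[dict]:
    """Merge leads that share a normalized (name, company) key.

    Earlier records win; later duplicates only fill fields that are still empty.
    """
    merged: dict[tuple[str, str], dict] = {}
    for lead in leads:
        key = (normalize(lead.get("name", "")), normalize(lead.get("company", "")))
        if key not in merged:
            merged[key] = dict(lead)
            continue
        existing = merged[key]
        for field_name, value in lead.items():
            if value and not existing.get(field_name):
                existing[field_name] = value
    return list(merged.values())
```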

Turning Browsing Into Productive Work

When teams browse LinkedIn, GitHub, company websites, or conference pages manually, much of that activity remains unstructured. An AI scraper helps convert that browsing into organized lead data that is easier to act on.

The value here is not only speed. It is the creation of a repeatable process: identify a strong source, extract profiles, tag and qualify the results, and move those leads into the next stage of your workflow.

Step 4: Enrich and Manage Your Scraped Leads

Extracting a list of names and companies is useful, but it is only the first step. To scrape leads with AI effectively, you need to improve the quality of the data and manage it in a way that supports outreach and follow-up.

Many raw lead lists are incomplete. You may have a name and title but no email, or a company and location but no direct contact path. Enrichment helps fill in those gaps.

From Raw Data to Enriched Profiles

Modern AI scraping tools are built to support this workflow. With a tool like ProfileSpider, enrichment can be used when a profile is missing important details such as an email address or social profile.

Instead of manually opening each result and researching it one by one, you can use the Enrich feature to scan the source more deeply and append missing data where available.

This makes the workflow more efficient by reducing the gap between extraction and outreach preparation.
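The general pattern behind enrichment is to fill gaps without overwriting what you already have. In this sketch, `lookup` is a hypothetical stand-in for whatever enrichment source you use; it is not ProfileSpider's Enrich feature or any real service's API. The function calls it only for leads that have missing fields and records which fields were appended.

```python
from typing import Callable, Optional

# A lookup takes a lead and returns any fields it found, or None.
Lookup = Callable[[dict], Optional[dict]]


def enrich(
    leads: list[dict],
    lookup: Lookup,
    fields: tuple[str, ...] = ("email", "linkedin"),
) -> list[dict]:
    """Fill missing `fields` from `lookup` without overwriting existing data.

    `lookup` is only called for leads that actually have gaps, and each enriched
    lead records which fields were appended so the data stays traceable.
    """
    for lead in leads:
        missing = [f for f in fields if not lead.get(f)]
        if not missing:
            continue
        found = lookup(lead) or {}
        added = [f for f in missing if found.get(f)]
        for f in added:
            lead[f] = found[f]
        lead["enriched_fields"] = added
    return leads
```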

Smart Contact Management Techniques

As lists grow, organization becomes essential. One large spreadsheet is difficult to maintain, segment, and act on. Managing contacts inside the scraping tool helps keep campaigns structured from the beginning.

Instead of storing everything in one place, create dedicated lists for each use case.

- For sales: Lists such as Q4 Enterprise Prospects, SMB Decision-Makers, or SaaS Conference Attendees

- For recruiting: Lists such as Senior Java Developers – NYC, UX/UI Designers – Remote, or Candidates from Competitors

This kind of segmentation makes it easier to apply tags, move contacts between workflows, and prepare lists for specific campaigns.

Bridging the Gap to Your CRM

The real value of scraping is not just collecting data; it is activation. Once lead data is structured, enriched, and organized, it should move quickly into your CRM, ATS, or outreach workflow so your team can act on it while the information is still timely.

With ProfileSpider, you can export an entire list—or a filtered subset—into a CSV or Excel file. That makes it easier to import your data into common platforms such as Salesforce, HubSpot, Zoho, or an ATS.

You can also choose which columns to export so the file better matches your destination system’s import format. This reduces cleanup work and helps preserve data integrity.
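If you assemble or reshape an export yourself, Python's `csv.DictWriter` can select columns and rename them to match an import template. The header names in `COLUMN_MAP` are hypothetical; check your CRM's or ATS's import template for the exact columns it expects.

```python
import csv
import io
from typing import Optional

# Hypothetical mapping from internal field names to a destination's import headers.
COLUMN_MAP = {
    "first_name": "First Name",
    "last_name": "Last Name",
    "email": "Email",
    "company": "Company",
    "title": "Job Title",
}


def export_csv(
    leads: list[dict],
    column_map: dict[str, str] = COLUMN_MAP,
    tag: Optional[str] = None,
) -> str:
    """Write the selected columns, renamed for the destination, optionally filtered by tag."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(column_map.values()))
    writer.writeheader()
    for lead in leads:
        if tag is not None and tag not in lead.get("tags", ()):
            continue
        # Fields not listed in column_map (such as tags) are left out of the file.
        writer.writerow({header: lead.get(field, "") for field, header in column_map.items()})
    return buffer.getvalue()
```

To save the result, write the returned string to a file opened with `newline=""` so the CSV line endings are preserved.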

To see how this workflow fits into a broader sales process, check out our guide on automating your sales pipeline by connecting web scraping with your CRM.

By following a workflow of extract, enrich, organize, and export, you turn public web data into qualified leads that are easier to activate.

Best Practices for Ethical and Compliant Scraping

Using AI to scrape leads can make prospecting more efficient, but it should be done responsibly. That means respecting the websites you use as sources, handling data carefully, and staying aware of applicable laws and platform rules.

The goal is to collect publicly available business information in a way that is practical, measured, and aligned with legitimate business use cases.

Respect the Source Website

A good rule is to treat source websites responsibly. Review their terms of service, avoid aggressive behavior that could strain their systems, and use the collected information appropriately.

Some core practices include:

- Check the robots.txt file: It indicates which parts of a site the owner has asked automated clients not to access.

- Keep a reasonable pace: Avoid sending requests so quickly that you strain or disrupt the source website.

- Use data appropriately: Collected lead data should support relevant sales, recruiting, or research workflows rather than spam or misuse.
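If you ever run your own script instead of a browser extension, Python's standard `urllib.robotparser` can apply the first two practices. This sketch parses robots.txt text you have already fetched, skips disallowed URLs, and pauses between the remaining ones using the site's `Crawl-delay` when it sets one. The user agent name `ExampleLeadBot` and the two-second default delay are assumptions.

```python
import time
import urllib.robotparser
from typing import Callable, Iterable, Iterator


def load_robots(robots_txt: str) -> urllib.robotparser.RobotFileParser:
    """Parse robots.txt content you already fetched (no network access here)."""
    parser = urllib.robotparser.RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def polite_urls(
    urls: Iterable[str],
    parser: urllib.robotparser.RobotFileParser,
    user_agent: str = "ExampleLeadBot",
    default_delay: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Iterator[str]:
    """Yield only URLs robots.txt allows for `user_agent`, pausing between them.

    Uses the site's Crawl-delay when one is set, otherwise `default_delay` seconds.
    """
    delay = parser.crawl_delay(user_agent) or default_delay
    first = True
    for url in urls:
        if not parser.can_fetch(user_agent, url):
            continue  # disallowed by robots.txt: skip it
        if not first:
            sleep(delay)
        first = False
        yield url
```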

For a deeper discussion of the topic, see our guide on whether website scraping is legal.

Prioritize Data Privacy and Compliance

Privacy and compliance should be part of the workflow from the beginning. Tools that give users more control over how extracted data is stored can help reduce unnecessary exposure.

A local-first approach can simplify data handling by keeping extracted profiles under the user’s control instead of routing them through an external cloud workflow by default.

ProfileSpider is designed around this principle. Extracted data is stored locally in the browser using IndexedDB, which gives users direct control over their lists, notes, and exports.

That does not remove the need to follow applicable laws, platform terms, and outreach rules, but it does give users tighter control over the data they collect and manage.

Use Results to Improve Future Scraping

Lead scraping becomes more effective over time when you treat it as a feedback loop, and CRM outcomes are the most useful signal. If certain job titles, industries, company sizes, or sources repeatedly convert into meetings, opportunities, or hires, use those patterns to refine which pages you target and which leads you prioritize in future campaigns.
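One simple way to run this feedback loop is to compute success rates per source (or per title, industry, and so on) from CRM outcomes. The outcome labels in `SUCCESS_OUTCOMES` are hypothetical stage names, and the `min_leads` parameter exists because rates computed from a handful of leads are mostly noise.

```python
from collections import defaultdict

# Outcomes counted as wins; these labels are hypothetical CRM stage names.
SUCCESS_OUTCOMES = {"meeting", "opportunity", "hired"}


def conversion_by(leads: list[dict], key: str, min_leads: int = 1) -> dict[str, float]:
    """Share of leads per `key` value (e.g. "source" or "title") that reached a success outcome.

    Groups with fewer than `min_leads` leads are skipped; results are sorted
    from highest to lowest rate.
    """
    totals: dict[str, int] = defaultdict(int)
    wins: dict[str, int] = defaultdict(int)
    for lead in leads:
        group = lead.get(key) or "unknown"
        totals[group] += 1
        if lead.get("outcome") in SUCCESS_OUTCOMES:
            wins[group] += 1
    rates = {group: wins[group] / n for group, n in totals.items() if n >= min_leads}
    return dict(sorted(rates.items(), key=lambda item: item[1], reverse=True))
```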

This helps turn lead scraping from a one-time tactic into a system that improves with each campaign.