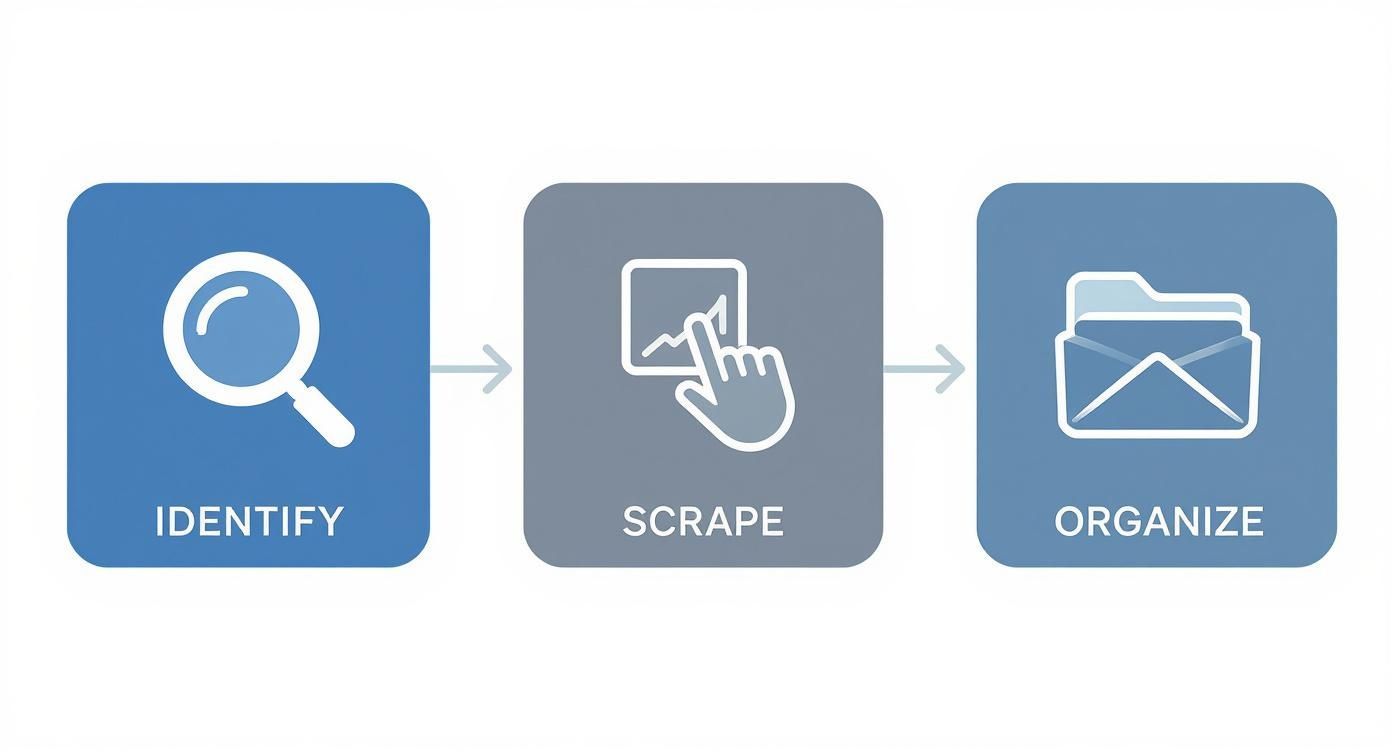

Feeding scraped lead data into your CRM is not just about saving time. It is about shortening the distance between finding a relevant prospect and getting that person into the system your team already uses for outreach, tracking, and follow-up. Instead of treating web research and CRM work as separate steps, you can turn them into one connected workflow built around faster lead capture and cleaner handoff.

In practical terms, that means collecting publicly available professional information from relevant web sources, organizing it before import, and moving it into your CRM in a format your sales team can act on immediately.

This article is part of our broader guide to lead scraping. If you want the complete end-to-end workflow, including lead sources, scraping methods, enrichment, data quality, CRM activation, and compliance, see Lead Scraping Guide: How to Scrape, Enrich, and Convert Leads at Scale.

To see how this process fits into a broader automated workflow, read our guide to lead scraping automation.

If your main challenge is processing larger volumes of leads efficiently, read our guide to scraping leads at scale.

Why CRM Handoff Is Where Many Prospecting Workflows Break

Most teams do not struggle to find websites with useful lead data. They struggle with what happens next. A rep identifies a good source, collects information manually or semi-manually, drops it into a spreadsheet, and then delays the CRM update until later. By the time that data gets imported, it may already be incomplete, duplicated, or missing context.

This gap between discovery and CRM entry creates operational drag. It also introduces avoidable problems:

- Inconsistent records: Different reps may enter names, job titles, and companies in different formats.

- Missing context: The source page, campaign segment, or reason a lead was saved often disappears before the record reaches the CRM.

- Delayed follow-up: Leads remain stuck in private spreadsheets or browser tabs instead of entering the actual sales workflow.

When this handoff is weak, the problem is not just inefficiency. It is loss of momentum.

Manual Prospecting Creates CRM Friction

Manual prospecting is often described as a top-of-funnel problem, but it is equally a systems problem. Sales teams may find decent prospects, yet still fail to operationalize them quickly because the path into the CRM is too slow or too messy.

That friction usually shows up in familiar ways:

- reps postponing CRM updates until the end of the day

- lists being stored temporarily in spreadsheets instead of the main system

- duplicate or partial contacts being imported later without proper review

- campaign segmentation happening after import instead of before it

The result is a CRM full of uneven records, weaker reporting, and less confidence in pipeline data.

Why Better Input Improves Everything Downstream

A CRM is only as useful as the quality of the data entering it. If contact details are inconsistent, missing, or poorly organized, it becomes harder to segment lists, assign ownership, prioritize outreach, and measure campaign performance accurately.

This is why the collection stage and the CRM stage should be treated as one connected process. A cleaner upstream workflow improves not only the speed of lead capture, but also the usefulness of the records once they are inside the system.

Manual Prospecting vs Automated CRM-Ready Capture

| Activity | Manual Workflow | CRM-Ready Automated Workflow |
|---|---|---|
| Lead Capture | Collected one by one across multiple tabs and spreadsheets. | Captured in batches from relevant public pages. |
| Record Quality | Often inconsistent or missing fields. | More structured before import. |
| Segmentation | Usually done later, after import. | Can be handled before export with lists and tags. |
| Time to CRM | Delayed by manual cleanup and formatting. | Faster handoff through export-ready formats. |
| Data Hygiene | Duplicates and errors are easier to miss. | Review and cleanup can happen before import. |
| Sales Readiness | Requires more manual preparation after capture. | Better suited for immediate routing and follow-up. |

Moving away from a spreadsheet-first process is not just a convenience upgrade. It is a way to build a cleaner entry point into the CRM and reduce the amount of rework later.

A Better Model: Capture First, Structure Early, Import Cleanly

A more effective workflow does not start inside the CRM. It starts at the source page, where prospects are first identified. The goal is to capture relevant public data, organize it before export, and only then move it into your CRM with the right fields, tags, and context already in place.

This is where a no-code scraper like ProfileSpider becomes useful. Instead of collecting information manually and fixing it later, you can extract profiles into a structured list, review the output, and prepare it for CRM import in the same workflow. For a broader look at how intelligent data collection supports modern prospecting, read our guide to AI lead sourcing.

What One-Click Extraction Changes

The key change is not just speed. It is that the initial capture becomes more structured from the beginning. Instead of opening profile after profile and copying isolated details, a scraper can detect profile patterns on a page and collect those records into one place.

That matters because it allows teams to:

- capture more than one profile at a time from search results, directories, or speaker pages

- keep source context intact by assigning leads to lists based on campaign or source

- prepare the data before export instead of cleaning everything up after import

For CRM workflows, this is a major improvement. The data arrives in a more usable state, and the sales team does less corrective work later.

Why Local Control Matters in CRM Workflows

Whenever lead data is being prepared for import into your core systems, control matters. Many scraping tools route collected data through external cloud services by default, which can create unnecessary concerns around storage, access, and governance.

ProfileSpider uses a local-first model, storing extracted data in the browser’s IndexedDB so users can review, organize, and export their lead data without default cloud storage in the middle of the workflow.

That local control does not replace legal or compliance obligations, but it gives teams a simpler way to manage what is collected, how long it is kept, and when it is exported into downstream systems.

For a closer look at how this style of tooling works, read our guide on automating web scraping with no-code tools.

How to Move From Source Page to CRM-Ready Lead List

The most useful way to think about this workflow is as a preparation layer before import. You are not just collecting contacts. You are shaping a CRM-ready dataset.

Step 1: Start With a Source That Matches a Real Campaign

Do not scrape first and decide what to do with the leads later. Start with a source page that fits an actual sales motion.

Examples include:

- LinkedIn search result pages: useful when targeting a specific title, geography, or industry

- conference speaker pages: useful when building lists tied to a market or theme

- company team pages: useful for account-based prospecting and stakeholder mapping

- industry directories: useful for vertical-specific outreach campaigns

This matters because CRM quality starts with source quality. If the source page is aligned with a real campaign, the imported data becomes more actionable.

Step 2: Extract the Leads Into a Working List

Once you are on the page, use ProfileSpider to capture the profiles into a list. Instead of thinking of the extraction step as “getting names,” think of it as creating a working dataset for a specific campaign.

That list may include fields such as:

- full name

- job title

- company name

- location

- profile URL

- company domain or related company details

If you are extracting from a multi-profile page, the advantage is especially clear: the dataset stays grouped and ready for review instead of being scattered across separate tabs and notes.
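If you later script parts of this preparation, it can help to treat each extracted profile as a typed record rather than a loose spreadsheet row. The sketch below is a minimal Python model of the fields listed above plus the campaign context discussed in the next step; the class name `Lead` and its attribute names are hypothetical and do not describe ProfileSpider's internal format.

```python
from dataclasses import asdict, dataclass, field


@dataclass
class Lead:
    """One extracted profile in a campaign working list (hypothetical schema)."""
    full_name: str
    job_title: str = ""
    company_name: str = ""
    location: str = ""
    profile_url: str = ""
    company_domain: str = ""
    # Campaign context travels with the record instead of living in a side note.
    list_name: str = ""
    tags: list[str] = field(default_factory=list)


# Illustrative record; values echo the example data used in this article.
working_list = [
    Lead(
        full_name="Jane Doe",
        job_title="Director of Product",
        company_name="Fintech Innovations Inc.",
        location="New York",
        profile_url="linkedin.com/in/janedoe",
        company_domain="fintechinnov.com",
        list_name="Fintech Product Leaders NYC",
        tags=["fintech", "product"],
    ),
]

# asdict() converts each record to a plain dict, e.g. for a CSV writer.
rows = [asdict(lead) for lead in working_list]
```

Dataclasses reject a bare mutable default such as `[]`, so `field(default_factory=list)` gives every record its own independent tag list.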

Step 3: Organize Before You Export

This is the step many teams skip, and it is exactly why their CRM imports become messy later. Before export, segment the list inside the scraping workflow.

For example, instead of exporting a generic file, create a list such as:

- Fintech Product Leaders – NYC

- Q4 ABM Target Accounts

- Conference Leads – Data Infrastructure

Add tags or notes where relevant. This creates context that can inform ownership, segmentation, or outreach once the records reach the CRM.

A clean pre-import workflow also helps with de-duplication. Before sending data into your CRM, it is worth making sure you are not creating redundant contact records. For that, read our guide on how to deduplicate lead data.
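The linked guide covers de-duplication in more depth. As a minimal sketch of the idea, assuming each row is a dict keyed by the export names used in the field-mapping table later in this article (`linkedinUrl`, `fullName`, `companyName`), you can drop repeats before export by comparing a normalized key: the profile URL when present, otherwise name plus company. Rows with too little data to compare are kept for manual review rather than merged.

```python
from typing import Optional


def normalize_url(url: str) -> str:
    """Strip scheme, 'www.', query string, and trailing slash; lowercase.

    Lowercasing assumes the source treats URL paths case-insensitively.
    """
    url = url.strip().lower()
    for prefix in ("https://", "http://"):
        if url.startswith(prefix):
            url = url[len(prefix):]
    if url.startswith("www."):
        url = url[len("www."):]
    return url.split("?", 1)[0].rstrip("/")


def dedupe_key(row: dict) -> Optional[tuple]:
    """Prefer the profile URL; fall back to normalized name + company."""
    url = normalize_url(row.get("linkedinUrl", ""))
    if url:
        return ("url", url)
    name = " ".join(row.get("fullName", "").split()).lower()
    company = " ".join(row.get("companyName", "").split()).lower()
    if name and company:
        return ("name+company", name, company)
    return None  # not enough data to judge; keep the row for manual review


def dedupe(rows: list[dict]) -> list[dict]:
    """Keep the first occurrence of each key, preserving input order."""
    seen: set[tuple] = set()
    unique = []
    for row in rows:
        key = dedupe_key(row)
        if key is None or key not in seen:
            if key is not None:
                seen.add(key)
            unique.append(row)
    return unique
```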

Importing Scraped Leads Into Your CRM Without Creating Chaos

The import itself is usually the easiest part, provided the preparation was handled properly. Most CRMs already support CSV or Excel imports, so the practical task is not technical integration in the API sense. It is field mapping and record hygiene.

Use a Structured Export Format

ProfileSpider exports lead data into formats such as CSV or Excel, which makes the handoff straightforward for systems like HubSpot, Salesforce, or Pipedrive.

Each row becomes a contact or prospect record, and each column becomes a potential CRM property. The cleaner the structure before export, the easier the import becomes.
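If you ever need to reshape a file before import, for example to drop columns the CRM does not need or to flatten tags, Python's standard `csv` module is enough. A minimal sketch, assuming rows are dicts keyed by the export names from the mapping table below plus hypothetical `listName` and `tags` columns; `EXPORT_COLUMNS` is an assumed allowlist, not a required format.

```python
import csv
import io

# Hypothetical import column order; keep only fields the CRM workflow needs.
EXPORT_COLUMNS = ["fullName", "title", "companyName", "email",
                  "linkedinUrl", "companyDomain", "listName", "tags"]


def to_csv(rows: list[dict], columns: list[str] = EXPORT_COLUMNS) -> str:
    """Serialize rows as CSV text: one header row, then one row per lead."""
    buffer = io.StringIO()
    # extrasaction="ignore" drops keys not listed in `columns`;
    # restval="" fills any listed column a row does not have.
    writer = csv.DictWriter(buffer, fieldnames=columns, restval="",
                            extrasaction="ignore", lineterminator="\n")
    writer.writeheader()
    for row in rows:
        out = dict(row)
        # CSV cells are flat text, so join list-valued tags into one cell.
        if isinstance(out.get("tags"), list):
            out["tags"] = ";".join(out["tags"])
        writer.writerow(out)
    return buffer.getvalue()
```

To save the result, write the text with `open("leads.csv", "w", newline="", encoding="utf-8")`; if the file will be opened in Excel first, `encoding="utf-8-sig"` adds a byte-order mark that helps Excel recognize UTF-8.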

Map the Right Fields, Not Just the Obvious Ones

Most teams map only the basics: name, title, company, email. That is necessary, but it is often not enough. A better import includes campaign logic.

In addition to core identity fields, consider mapping:

- source list name to a campaign or custom source field

- tags to labels, lifecycle segmentation, or notes

- company domain to website or account fields

- profile URL to a custom research or reference field

This makes the CRM record more useful after import because it preserves why the lead entered the system in the first place.

Example CSV to CRM Field Mapping

| ProfileSpider Export Field | Example Data | Corresponding CRM Field |
|---|---|---|
| fullName | "Jane Doe" | Full Name or Name |
| title | "Director of Product" | Job Title |
| companyName | "Fintech Innovations Inc." | Company Name |
| email | "jane.d@fintechinnov.com" | Email |
| phone | "+1-555-123-4567" | Phone Number |
| linkedinUrl | "linkedin.com/in/janedoe" | LinkedIn Profile URL or custom source field |
| companyDomain | "fintechinnov.com" | Website URL |
| employees | "501-1000" | Company Size |

Once this mapping is defined, future imports become much faster and more consistent.
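Most CRM import tools let you match columns to properties during the import itself. If you prepare files with a script instead, the same mapping can live in one dictionary that every import reuses. A minimal sketch: the keys are the export field names from the table above, while the CRM-side labels and the `listName` and `tags` campaign columns are placeholders rather than any specific CRM's property names.

```python
# Left: export field names from the mapping table.
# Right: placeholder CRM property labels; use your CRM's actual ones.
FIELD_MAP = {
    "fullName": "Full Name",
    "title": "Job Title",
    "companyName": "Company Name",
    "email": "Email",
    "phone": "Phone Number",
    "linkedinUrl": "LinkedIn Profile URL",
    "companyDomain": "Website URL",
    "employees": "Company Size",
    # Campaign context columns (hypothetical names).
    "listName": "Lead Source Campaign",
    "tags": "Segment Tags",
}


def map_row(row: dict, field_map: dict = FIELD_MAP) -> tuple[dict, list[str]]:
    """Rename known columns; return unmapped keys so they are not lost silently."""
    mapped: dict = {}
    unmapped: list[str] = []
    for key, value in row.items():
        if key in field_map:
            mapped[field_map[key]] = value
        else:
            unmapped.append(key)
    return mapped, unmapped
```

Returning the unmapped keys instead of discarding them makes a new or renamed export column visible the first time it appears, rather than after records have been imported without it.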

Use the CRM to Route and Prioritize, Not Just Store

The point of the import is not simply to archive leads inside your CRM. It is to make them operational.

A strong CRM handoff preserves enough structure for your team to assign ownership, prioritize accounts, launch segmented outreach, and measure source performance without rebuilding context after import.

This is also where lead scoring can become useful. Once structured data is flowing into the CRM consistently, you can prioritize leads based on role, industry, company size, or source quality. If you want to explore that next layer, see this guide to HubSpot lead scoring.
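As a rough illustration of rule-based prioritization, assuming rows carry the `title` and `employees` fields from the mapping table plus a hypothetical `listName` source column, a score can simply add points per attribute. The keywords, size bands, and point values below are arbitrary placeholders to be tuned against real outcomes; in practice you would usually configure equivalent rules in the CRM's own scoring tools rather than in a script.

```python
# Placeholder weights: tune these against your own pipeline results.
TITLE_POINTS = {"chief": 30, "vp": 25, "head": 20, "director": 20, "manager": 10}
SIZE_POINTS = {"51-200": 10, "201-500": 15, "501-1000": 20, "1001-5000": 20}
SOURCE_POINTS = {"Q4 ABM Target Accounts": 15}


def score_lead(row: dict) -> int:
    """Add points for seniority, company size band, and source list."""
    words = row.get("title", "").lower().replace(",", " ").split()
    # Use the single best seniority keyword so multi-keyword titles are not double-counted.
    title_score = max((pts for kw, pts in TITLE_POINTS.items() if kw in words), default=0)
    size_score = SIZE_POINTS.get(row.get("employees", ""), 0)
    source_score = SOURCE_POINTS.get(row.get("listName", ""), 0)
    return title_score + size_score + source_score
```

To work a list in priority order, sort it with `sorted(rows, key=score_lead, reverse=True)`.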

Build a CRM Workflow That Stays Clean Over Time

One successful import is not the goal. The goal is a repeatable process that keeps your pipeline fresh without degrading CRM quality over time.

That means treating web scraping and CRM entry as an ongoing operating process, not a one-off campaign tactic.

Keep Source Selection Tied to Real Outcomes

Not every source page produces equally valuable leads. Over time, your CRM should help answer questions like:

- Which source types convert into meetings most often?

- Which job titles actually move into pipeline?

- Which company segments are worth revisiting?

Those answers should influence future scraping priorities. A CRM-connected workflow becomes more effective when source selection is informed by sales outcomes, not just convenience.
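Once the CRM records which leads reached a meeting, the first question above reduces to a grouped ratio. A minimal sketch, assuming you export CRM records with a source-list column named `listName` and a meeting flag named `meetingBooked` (both hypothetical names; your CRM's fields will differ).

```python
from collections import defaultdict


def _is_yes(value) -> bool:
    """Interpret CRM export flags; CSV text like "false" must not count as True."""
    if isinstance(value, bool):
        return value
    return str(value).strip().lower() in {"true", "yes", "1"}


def meeting_rate_by_source(records: list[dict]) -> dict[str, float]:
    """Share of leads from each source list that reached a meeting."""
    totals: dict[str, int] = defaultdict(int)
    meetings: dict[str, int] = defaultdict(int)
    for rec in records:
        source = rec.get("listName") or "(unlabeled)"
        totals[source] += 1
        if _is_yes(rec.get("meetingBooked", False)):
            meetings[source] += 1
    return {src: meetings[src] / totals[src] for src in totals}
```

Small lists make these rates noisy, so a source with a handful of leads is a hint worth watching rather than a verdict.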

Review Data Hygiene Before It Becomes a Reporting Problem

If duplicate records, weak mappings, or poor segmentation are allowed to accumulate, CRM reporting degrades quickly. It is easier to maintain quality through light review at the export and import stages than to fix a polluted database later.

That means reviewing:

- duplicate contacts before import

- missing fields that affect routing or scoring

- inconsistent source labels

- whether tags and notes are being used consistently across campaigns

A CRM workflow stays useful when the inputs stay disciplined.
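A lightweight pre-import check can cover the first three review items automatically. A minimal sketch, assuming rows use the export names from the mapping table plus a hypothetical `listName` column, and that `REQUIRED_FIELDS` (also an assumption) lists what your routing and scoring depend on. It only reports problems and leaves the fixes to a person.

```python
from collections import Counter, defaultdict

# Assumed minimum fields for routing and scoring; adjust to your workflow.
REQUIRED_FIELDS = ("fullName", "companyName", "title", "listName")


def hygiene_report(rows: list[dict]) -> dict:
    """Flag duplicates, missing fields, and inconsistent source labels."""
    # 1. Profile URLs that appear more than once (after trim + lowercase).
    url_counts = Counter(r.get("linkedinUrl", "").strip().lower() for r in rows)
    duplicate_urls = sorted(u for u, n in url_counts.items() if u and n > 1)

    # 2. Rows (by index) missing any field that routing or scoring relies on.
    missing_fields = []
    for i, row in enumerate(rows):
        missing = [f for f in REQUIRED_FIELDS if not str(row.get(f, "")).strip()]
        if missing:
            missing_fields.append((i, missing))

    # 3. Source labels that differ only in case or spacing.
    variants = defaultdict(set)
    for row in rows:
        label = row.get("listName", "")
        if label:
            variants[" ".join(label.split()).lower()].add(label)
    label_variants = sorted(sorted(v) for v in variants.values() if len(v) > 1)

    return {"duplicate_urls": duplicate_urls,
            "missing_fields": missing_fields,
            "label_variants": label_variants}
```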

Build the Pipeline Responsibly

Connecting scraping workflows to a CRM creates real leverage, but it also requires discipline. Teams should focus on publicly available professional information, stay aware of applicable laws and platform rules, and avoid treating data collection as a shortcut around responsible prospecting.

Keep Data Handling Practical and Controlled

A privacy-aware workflow reduces unnecessary exposure. ProfileSpider’s local-first design helps by letting users collect, organize, and export lead data without default cloud storage in the middle of the process.

- You control the export: data stays local until you choose to move it

- You control retention: lists can be reviewed before entering downstream systems

- You control downstream usage: only the fields needed for CRM workflows need to be imported

That local-first model is not the same as automatic compliance, but it gives teams more direct control over how collected data is handled. For more on why that matters, read our guide to owning your lead data.

Respect the Source Website

Responsible scraping means respecting the websites you use as sources. Review their terms where relevant, avoid aggressive collection behavior, and stay focused on information that is publicly available in a professional context.

The practical rule is simple: collect only what is relevant to a legitimate sales or recruiting workflow, and handle that information in a way that is proportionate, documented, and respectful of both the source platform and the people represented in the data.
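If any part of your workflow runs your own scripts, one concrete way to avoid aggressive collection is to honor a site's robots.txt and pace requests. A minimal sketch using Python's standard `urllib.robotparser`, assuming you have already fetched the robots.txt text; the user-agent string and fallback delay are hypothetical values. robots.txt expresses crawler preferences and does not replace reading the site's terms.

```python
import time
from urllib.robotparser import RobotFileParser

USER_AGENT = "example-lead-research-bot"  # hypothetical identifier
DEFAULT_DELAY_SECONDS = 5.0               # assumed conservative fallback


def make_policy(robots_lines: list[str]) -> RobotFileParser:
    """Build a parser from robots.txt content that was already fetched."""
    parser = RobotFileParser()
    parser.parse(robots_lines)
    return parser


def allowed_urls(parser: RobotFileParser, urls: list[str]) -> list[str]:
    """Keep only URLs the site's robots.txt permits for this user agent."""
    return [u for u in urls if parser.can_fetch(USER_AGENT, u)]


def polite_pause(parser: RobotFileParser) -> None:
    """Sleep for the site's Crawl-delay, or the conservative default."""
    delay = parser.crawl_delay(USER_AGENT)
    time.sleep(delay if delay is not None else DEFAULT_DELAY_SECONDS)
```

Calling `polite_pause(policy)` between page requests keeps collection at the pace the site asks for, or slower when it states no preference.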

If you want a deeper overview of the legal considerations, read our guide on whether website scraping is legal.

Use the Same Model for Recruiting When Relevant

Although this article focuses on CRM workflows for sales, the same structure can support talent workflows built around public professional data. If you are applying these methods to sourcing, read our guide on how to source candidates. For broader hiring strategy, see our guides to what recruitment marketing is and recruiting process improvement.

Common Questions About Web Scraping and CRM Workflows

Teams adopting this workflow usually ask the same practical questions first: how hard is the import, how clean will the data be, and how much technical work is actually required?

Do I Need a Developer to Get Scraped Leads Into My CRM?

No. For most teams, the workflow does not require a developer. The standard pattern is to export a structured CSV or Excel file from the scraper, then use the CRM’s built-in import tool to map the fields.

That means the real work is usually not engineering. It is preparation: choosing the right source, structuring the list, and mapping the right fields consistently.

How Accurate Is CRM-Ready Scraped Data?

The accuracy of scraped data depends on the source page and the extraction quality, but one major advantage is that the information is collected directly from live public sources rather than from stale purchased lists. A structured scraper also reduces the typing mistakes that come with manual entry.

That said, the goal should not be blind trust. The right workflow includes review, light cleanup, and duplicate control before import.

What Happens if the Source Website Changes?

Custom scripts often fail when page layouts change. Tools built for non-technical users aim to reduce that fragility by using more flexible extraction logic, which can make the workflow more resilient and easier to maintain over time than a manual or script-heavy process.

Is This Mainly About Speed?

Speed is part of it, but the bigger advantage is operational consistency. A good scraping-to-CRM workflow helps teams preserve context, improve record quality, and reduce the lag between lead discovery and first action inside the CRM.

That consistency is what makes the process useful week after week, not just on a single import.