The easiest way to scrape a website to CSV without Python is with a no-code Chrome extension that detects structured data for you and exports it in a few clicks. Instead of writing scripts, cleaning HTML, or learning selectors, you can extract profiles, company data, contact details, and lead lists directly from the page and download everything as a CSV file ready for Excel, Google Sheets, or your CRM.

That makes this workflow ideal for recruiters, sales teams, marketers, and researchers who need data fast but do not want to code. What used to require a Python script can now be done in a browser tab with a beginner-friendly workflow.

Why You Don't Need Python to Scrape Web Data for Leads and Research

For years, web scraping was treated like a technical task reserved for developers. If you wanted to collect data from a website, you were expected to learn Python, inspect HTML, handle pagination, and build a custom script just to export a simple list.

That is no longer necessary for most business use cases. Today, if your goal is to build a prospect list, collect candidate profiles, extract company information, or save public website data into a spreadsheet, a no-code browser workflow is often the faster and smarter option.

For business users, the real objective is not to become a programmer. It is to get usable data now. Manually copying names, job titles, emails, company names, or profile URLs from a website into a spreadsheet is slow, repetitive, and difficult to scale. No-code scraping tools remove that bottleneck.

The Problem: Manual Data Collection Is Slow and Error-Prone

Imagine you need to build a list of prospects from a company directory, membership site, or professional network.

The manual workflow usually looks like this:

- Open the website in your browser.

- Open a spreadsheet.

- Copy the first company or person’s name.

- Paste the value into the correct column.

- Repeat for title, company, location, profile URL, and contact details.

- Do it again for every result on the page.

This process is tedious and unreliable. It creates copy-paste mistakes, inconsistent formatting, duplicate rows, and missing data. Even a small list of 50 contacts can take hours to assemble manually. That is time better spent on outreach, follow-up, recruiting conversations, or analysis.

The Solution: One-Click Data Extraction with No-Code Tools

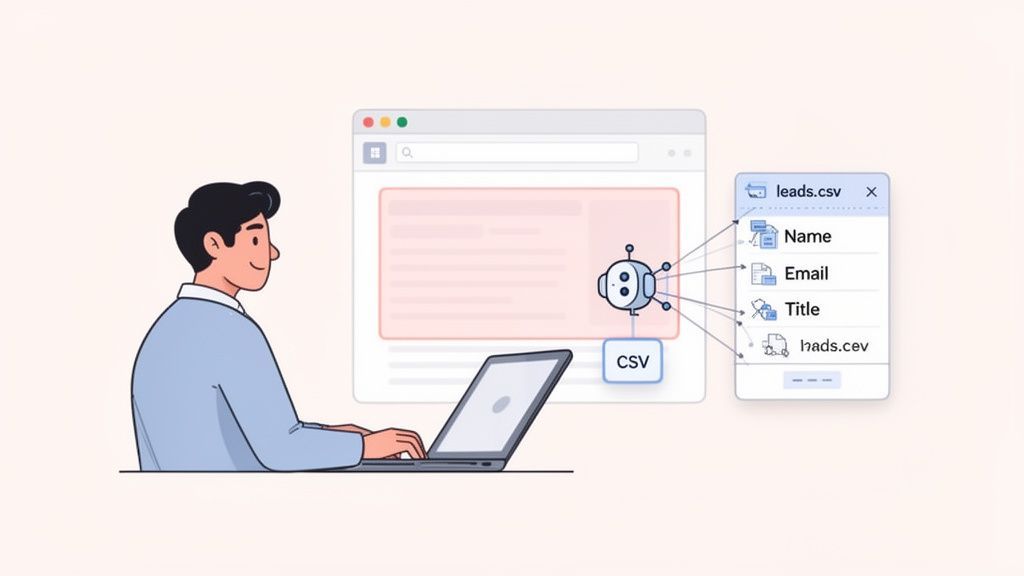

No-code scraping tools have changed the process completely. Instead of writing Python scripts, you use a browser extension that reads the page, identifies repeated data patterns, and turns what it finds into structured rows and columns.

With a tool like ProfileSpider, the workflow is simple:

- Open the page you want to scrape.

- Click the ProfileSpider extension in Chrome.

- Click "Extract Profiles."

- Review the detected data.

- Click "Download as CSV."

That is it. In less than a minute, you can export a clean dataset containing names, job titles, companies, profile links, and contact details, depending on what is publicly available on the page. Instead of scraping with Python, you are using a point-and-click workflow built for non-technical users.

Key Takeaway: You do not need Python to scrape a website to CSV anymore. For many lead generation, recruiting, and research tasks, a no-code Chrome extension is faster, easier, and more practical than building a custom script.

Who Benefits Most from No-Code Website Scraping?

No-code scraping is especially useful for teams that need structured public data quickly and repeatedly.

- Sales Teams: Build prospect lists from directories, company websites, event pages, and professional networks without manual entry.

- Recruiters: Extract candidate names, job titles, companies, locations, and profile links for sourcing workflows.

- Marketers: Gather competitor data, testimonials, pricing, or business listings for research and campaign planning.

- Researchers: Collect public datasets from websites into CSV files for analysis in spreadsheets or BI tools.

The big advantage is not just convenience. It is speed, scale, and consistency. You move from one-by-one copying to repeatable extraction. For a broader overview, see our guide on automating web scraping with no-code tools.

How to Scrape a Website to CSV Without Python: The Beginner-Friendly Chrome Workflow

This is where no-code scraping becomes especially powerful. You do not have to tell the tool which HTML tag to target or build a parser from scratch. A modern browser extension can detect repeated patterns on the page and organize the results automatically.

When you open a page full of profiles, companies, or listings, the extension analyzes the visible content and looks for data points that belong together.

Here is what happens after you click the extension:

- Page Analysis: The tool scans the page for repeating blocks of structured information, such as profile cards, directory listings, or company entries.

- Field Detection: It identifies useful fields like names, titles, company names, email addresses, phone numbers, URLs, and social links where available.

- Structured Output: The extracted information is organized into a preview table so you can review it before exporting.

- CSV Export: You download the results as a CSV file for use in Excel, Google Sheets, or your CRM.

This is why the browser-extension approach works so well for beginners. The browser is already rendering the page for you, so you can extract data from what you see without touching Python or complicated scraping logic.
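You do not need to write any code to use this workflow. If you are curious what "find repeated blocks, then map fields to columns" looks like in practice, the Python sketch below shows the general idea on a small, hypothetical HTML snippet. It assumes each card carries a `profile-card` class and each field a `name`, `title`, or `company` class. Real pages are structured in many different ways, and this illustrates the general technique only. It is not how ProfileSpider works internally.

```python
# Hypothetical sketch: detect repeated "profile cards" and export them as CSV.
# ASSUMPTION: each card has class="profile-card" and each field a class of
# "name", "title", or "company". This is not ProfileSpider's actual method.
import csv
import io
from html.parser import HTMLParser

FIELDS = ["name", "title", "company"]

class CardParser(HTMLParser):
    def __init__(self):
        super().__init__()
        self.rows = []     # one dict per detected card
        self.field = None  # field whose text is currently being read

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if "profile-card" in classes:
            self.rows.append({})  # each repeated block becomes a new row
        elif self.rows:
            for f in FIELDS:
                if f in classes:
                    self.field = f

    def handle_endtag(self, tag):
        self.field = None  # stop reading when the field's element closes

    def handle_data(self, data):
        if self.field and data.strip():
            self.rows[-1][self.field] = data.strip()

def cards_to_csv(html):
    """Parse the HTML and return CSV text with one row per card."""
    parser = CardParser()
    parser.feed(html)
    parser.close()
    out = io.StringIO()
    # restval="" writes an empty cell when a card lacks a field
    writer = csv.DictWriter(out, fieldnames=FIELDS, restval="")
    writer.writeheader()
    writer.writerows(parser.rows)
    return out.getvalue()

SAMPLE = """
<div class="profile-card"><h3 class="name">Ada Lovelace</h3>
  <span class="title">Engineer</span><span class="company">Acme</span></div>
<div class="profile-card"><h3 class="name">Alan Turing</h3>
  <span class="title">Researcher</span></div>
"""

print(cards_to_csv(SAMPLE))
```

The second card has no company, yet it still produces a row with an empty cell. That is the behavior you want from any CSV export, because it keeps every column aligned.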

The Business Value of Speed and Simplicity

When data collection becomes easy, teams can move faster. Instead of waiting on a developer or outsourcing a custom scraper, business users can collect data exactly when they need it.

That has obvious benefits:

- Faster List Building: Generate prospect lists, candidate lists, or research datasets in minutes instead of hours.

- Less Manual Work: Spend less time copying and formatting data and more time acting on it.

- Cleaner Exports: Structured CSV output reduces formatting problems and copy-paste errors.

- More Agility: Sales, recruiting, and marketing teams can gather data on demand without technical blockers.

Key Takeaway: The real win is not just that no-code scraping is easier. It is that it turns website data into an immediate business asset you can sort, filter, enrich, and use for outreach or analysis.

A Practical Example: Scraping Profiles and Exporting Them to CSV

Imagine a recruiter is reviewing a niche job board with 30 software engineer profiles. Each listing includes a name, current company, title, and a link to a personal website or portfolio.

The old workflow would mean opening profiles one by one, copying each field manually, and assembling the spreadsheet by hand.

With ProfileSpider, the recruiter can open the page, click the extension, extract the profiles, and export the dataset immediately. If additional contact details are needed, an enrichment step can help add more usable information, such as emails or social links, when publicly available.

The result is a clean CSV file that can be opened in Excel or Google Sheets, reviewed, and used for sourcing outreach in a fraction of the usual time. Our complete ProfileSpider deep-dive guide explains these advanced capabilities in more detail.

Other No-Code Methods to Scrape a Website to CSV

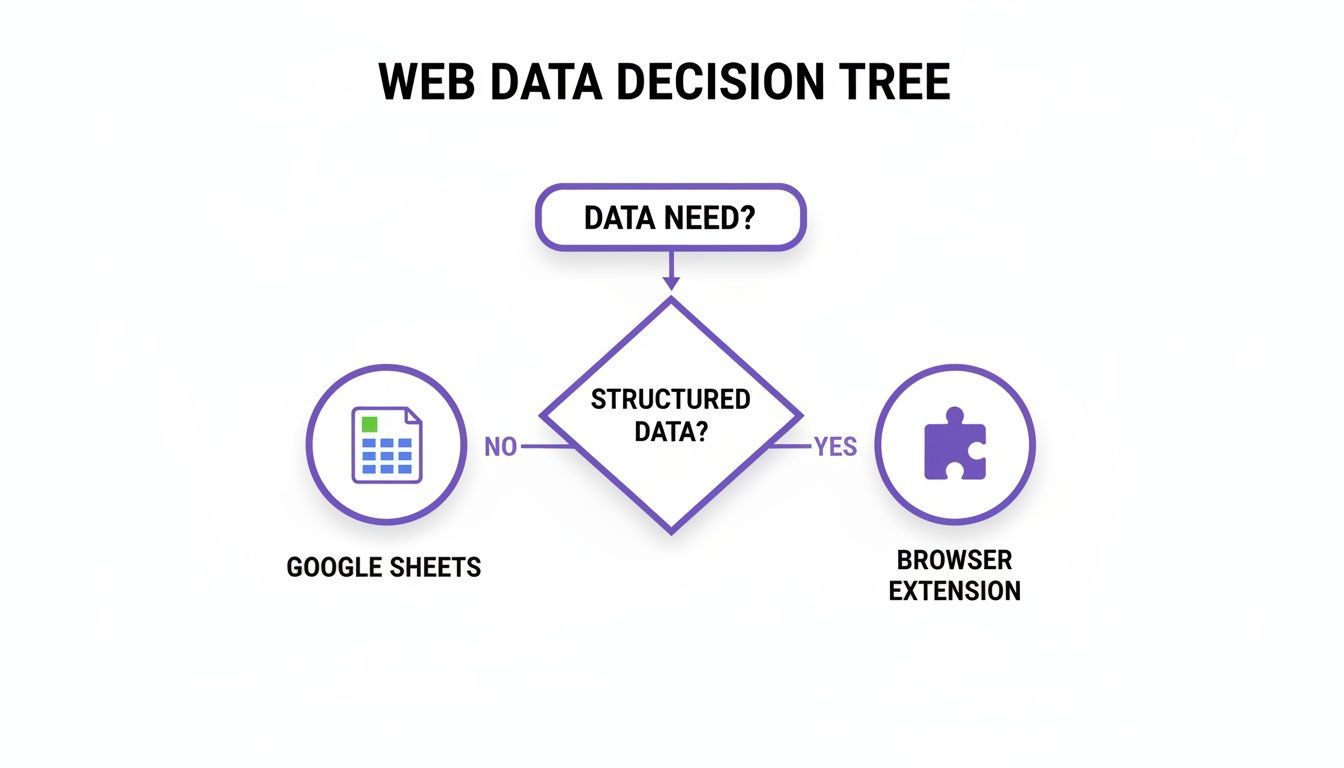

While a one-click Chrome extension is often the fastest option for extracting profiles and business data, it is not the only no-code method. Depending on the type of page and the complexity of the task, you may prefer a spreadsheet formula, a manual browser method, or a dedicated scraping platform.

The best choice depends on the data source. If you need to extract repeated profile or company data from a visible web page, a browser extension is usually the most direct route. If the site is very simple and static, a spreadsheet-based method may be enough.

1. Using Google Sheets for Simple Data Tables

Google Sheets includes a function called IMPORTXML that can pull data from simple, static web pages.

The formula looks like this: =IMPORTXML("URL", "XPath_query")

- URL: The page you want to pull data from.

- XPath_query: The instruction that tells Google Sheets which element or data point to extract.

This can work well for simple tables, lists, or static page elements.

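Challenge: It usually breaks on modern, dynamic websites, because IMPORTXML reads the page's HTML as the server sends it and does not run the JavaScript that many sites use to load their content. It also requires some technical understanding of XPath. It is useful for lightweight data grabs, but not ideal for profile extraction, lead generation, or more advanced list building.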
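To see what an XPath query actually selects, here is a small offline illustration. It uses Python's built-in xml.etree.ElementTree, which supports only a limited subset of XPath, as a stand-in for the selection step that IMPORTXML performs. The page markup and the `members` table id are hypothetical.

```python
# Illustration of an XPath-style query using Python's xml.etree.ElementTree,
# which supports only a limited XPath subset. Google Sheets' IMPORTXML is not
# involved here; the markup and the "members" id are hypothetical.
import xml.etree.ElementTree as ET

PAGE = """
<html><body>
  <table id="members">
    <tr><td>Acme Ltd</td><td>London</td></tr>
    <tr><td>Globex</td><td>Berlin</td></tr>
  </table>
</body></html>
""".strip()

root = ET.fromstring(PAGE)  # the <html> element

# Comparable to =IMPORTXML(url, "//table[@id='members']//tr/td[1]"):
# the first cell of every row in the table with id "members".
first_cells = [td.text for td in root.findall(".//table[@id='members']//tr/td[1]")]
print(first_cells)  # ['Acme Ltd', 'Globex']
```

Using // before tr lets the query match rows whether or not they are wrapped in a tbody element, which makes it a little more forgiving of markup differences.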

2. Browser Developer Tools for Copying Simple HTML Tables

If the data already appears in a clean HTML table, you can sometimes copy it using your browser’s built-in developer tools.

Here is the basic process:

- Right-click the table and choose "Inspect."

- Find the `<table>` element in the HTML.

- Right-click it and choose "Copy" > "Copy element."

- Paste the HTML into a file and open it in Excel or Google Sheets.

Challenge: This is a manual workaround, not a scalable method. It only works on simple table-based pages and does not help with repeated profile cards, infinite scroll, or dynamic content.
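If you do use the copy-element route, a short script can turn the copied HTML into a proper CSV file instead of relying on the spreadsheet to interpret raw markup. The Python sketch below is a minimal, standard-library version. It assumes a simple table with explicit closing tags (which the "Copy element" output includes) and no merged cells (rowspan or colspan). The sample table is hypothetical.

```python
# Minimal sketch: convert a copied <table> element into CSV text.
# ASSUMPTIONS: explicit </td>/</th> closing tags, no rowspan/colspan.
import csv
import io
from html.parser import HTMLParser

class TableParser(HTMLParser):
    def __init__(self):
        super().__init__()
        self.rows = []    # list of rows, each a list of cell strings
        self.cell = None  # text pieces of the cell being read, or None

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self.rows.append([])
        elif tag in ("td", "th") and self.rows:
            self.cell = []

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self.cell is not None:
            # Collapse internal whitespace and line breaks into single spaces
            self.rows[-1].append(" ".join("".join(self.cell).split()))
            self.cell = None

    def handle_data(self, data):
        if self.cell is not None:
            self.cell.append(data)

def table_to_csv(html):
    """Return CSV text with one line per non-empty table row."""
    parser = TableParser()
    parser.feed(html)
    parser.close()
    out = io.StringIO()
    csv.writer(out).writerows(row for row in parser.rows if row)
    return out.getvalue()

COPIED = """<table><tr><th>Company</th><th>City</th></tr>
<tr><td>Acme, Inc.</td><td>Paris</td></tr></table>"""

print(table_to_csv(COPIED))
```

The CSV writer quotes "Acme, Inc." automatically, so a comma inside a cell does not split it into two columns when the file is opened in Excel or Google Sheets.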

3. Dedicated Web-Based Scraping Platforms

Some web-based scraping tools let you create repeatable extraction workflows by selecting elements visually. These platforms can be useful for recurring projects or larger-scale scraping tasks.

Challenge: They often have a steeper learning curve, can be more expensive, and usually need a separate workflow set up for each site. They are powerful, but less convenient for quick, ad-hoc extraction than a one-click browser extension. The general idea fits into the broader movement toward no-code automation, where non-technical users create repeatable workflows without coding.

Key Insight: If your main goal is to scrape visible website data into a CSV file quickly, especially for profiles, people, and company information, a no-code Chrome extension is usually the fastest and most beginner-friendly option.

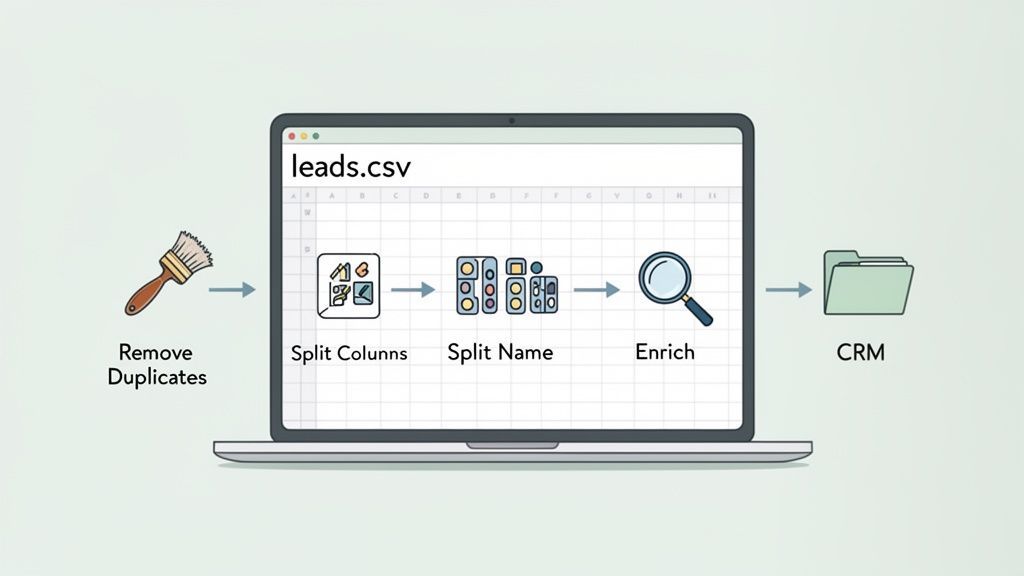

From CSV Export to Spreadsheet-Ready Data

Exporting a CSV file is only the first step. To turn scraped data into something useful for outreach, reporting, or CRM imports, you usually need a short cleanup pass.

The good news is that CSV files are easy to work with in both Google Sheets and Microsoft Excel. Once your file is open, you can sort, filter, deduplicate, and reformat the data for your workflow.

Essential Data Cleaning and Formatting Tasks

Here are a few of the most common cleanup steps after scraping a website to CSV:

- Remove Duplicates: Eliminate repeated entries before importing the list into a CRM or outreach tool.

- Split Columns: Separate a full name into first and last name fields so you can personalize emails or sequences.

- Normalize Company Names: Clean inconsistent naming so records are easier to group and analyze.

- Standardize Titles and Locations: Make the dataset more consistent for filtering and segmentation.

- Check Missing Fields: Identify which rows need enrichment before outreach begins.
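Google Sheets and Excel both have built-in tools for removing duplicates and splitting text into columns, so none of this requires code. For readers who prefer to script the cleanup, here is a Python sketch of three of the steps: removing duplicates, splitting names, and flagging missing fields. The column names `name`, `company`, and `email` are assumptions about a hypothetical export, and splitting on the first space is a simplification that will mishandle some names.

```python
# Hypothetical cleanup sketch: dedupe, split names, flag missing emails.
# ASSUMPTION: the export has "name", "company", and "email" columns.
import csv
import io

def clean_rows(csv_text):
    """Return deduplicated rows with first/last name and an enrichment flag."""
    seen = set()
    cleaned = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        name = (row.get("name") or "").strip()
        company = (row.get("company") or "").strip()
        email = (row.get("email") or "").strip()

        # Remove duplicates: same name + company (case-insensitive) = same person.
        key = (name.lower(), company.lower())
        if key in seen:
            continue
        seen.add(key)

        # Split columns: first word becomes first_name, the rest last_name.
        # Simplification: middle names end up in last_name.
        first, _, last = name.partition(" ")
        row["first_name"], row["last_name"] = first, last

        # Check missing fields: flag rows with no email for enrichment.
        row["needs_enrichment"] = "no" if email else "yes"
        cleaned.append(row)
    return cleaned

EXPORT = """name,company,email
Ada Lovelace,Acme,ada@example.com
ada lovelace,ACME,
Alan Mathison Turing,Globex,
"""

for r in clean_rows(EXPORT):
    print(r["first_name"], "|", r["last_name"], "|", r["needs_enrichment"])
```

Deduplicating on name plus company, rather than on email, still works when many rows do not have an email yet.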

Key Takeaway: A CSV export is valuable because it is flexible. You can open it in Excel or Google Sheets, clean it in minutes, and turn a raw website scrape into a reliable working list.

From a Basic CSV File to an Enriched Lead List

Sometimes your scrape gives you the visible basics: name, title, company, and profile URL. That is useful, but not always enough to start outreach. The next step is enrichment.

With ProfileSpider, you can go beyond the initial scrape and add more publicly available details where possible, such as contact information and social links. That turns a basic exported list into a stronger prospecting or sourcing asset.

A simple workflow looks like this:

- Initial Scrape: Extract names, titles, companies, and profile URLs from the page.

- CSV Export: Download the structured file for review.

- Enrichment: Add missing fields such as emails or social links where available.

- CRM or Outreach Import: Upload the cleaned data into your sales or recruiting workflow.

This is where no-code scraping becomes especially practical for business teams. You are not just collecting data. You are building a usable list that can support prospecting, hiring, or market research. For more on that workflow, read our guide on how to feed your sales pipeline automatically with web scraping.

Scraping Data Ethically and Responsibly

Knowing how to scrape a website to CSV without Python is useful, but it should always be done responsibly. Good scraping practices protect websites, reduce the risk of blocks, and help you stay focused on appropriate public data.

It is worth understanding the basics of ethical data practices before scraping at scale. In practical terms, that means respecting the website, following its rules, and avoiding sensitive or private information.

Follow the Website’s Rules

Before extracting data, review the site’s policies and basic instructions for automated access.

- Terms of Service: Some websites explicitly restrict automated data collection for commercial use.

- robots.txt: This file provides guidance for crawlers and automated tools about which paths should or should not be accessed.
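If you want to check a robots.txt file programmatically, Python's standard library includes a parser for it. The sketch below feeds it a small, hypothetical set of rules offline. Against a real site, you would point `set_url()` at that site's robots.txt and call `read()` instead of `parse()`. Crawl-delay is a nonstandard directive that some sites publish and some crawlers honor.

```python
# Checking hypothetical robots.txt rules with Python's standard library.
from urllib.robotparser import RobotFileParser

# Hypothetical rules; a real check would use
# rp.set_url("https://example.com/robots.txt") followed by rp.read().
rules = """User-agent: *
Disallow: /members/
Crawl-delay: 5""".splitlines()

rp = RobotFileParser()
rp.parse(rules)

print(rp.can_fetch("*", "https://example.com/directory/companies"))  # True: not disallowed
print(rp.can_fetch("*", "https://example.com/members/profile/42"))   # False: under /members/
print(rp.crawl_delay("*"))                                           # 5
```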

A safe rule is to focus on publicly visible business information, such as company listings, public profiles, directories, and similar content. Avoid private, personal, copyrighted, or login-protected data.

Key Insight: Responsible scraping starts with restraint. Just because data is visible does not mean every use of it is appropriate.

Scrape at a Reasonable Pace

Websites can be disrupted if tools make requests too aggressively. That is why considerate scraping matters. A slower, more human-like pace helps reduce server load and lowers the chance of being blocked.

Many no-code tools, browser-based extensions in particular, are designed to avoid overly aggressive behavior. For a practical checklist, see our lead scraping compliance checklist.
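The idea of a considerate pace can be sketched as a simple throttle that enforces a minimum gap between requests. This is a minimal Python illustration. The five-second interval is an assumed example value (if a site's robots.txt specifies a crawl delay, that value is a better guide). The fake clock exists only so the demo runs instantly, and the page names are hypothetical.

```python
# Minimal throttle sketch: enforce a minimum gap between requests.
# ASSUMPTION: 5 seconds is an illustrative interval, not a universal rule.
import time

class Throttle:
    def __init__(self, min_interval=5.0, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self.clock = clock    # injectable so the logic can be tested
        self.sleep = sleep
        self.last = None      # time of the previous request, if any

    def wait(self):
        """Block until min_interval seconds have passed since the last call."""
        now = self.clock()
        if self.last is not None:
            remaining = self.min_interval - (now - self.last)
            if remaining > 0:
                self.sleep(remaining)
                now = self.clock()
        self.last = now

# Demo with a fake clock so no real time passes.
class FakeClock:
    def __init__(self):
        self.t = 0.0

    def now(self):
        return self.t

    def sleep(self, seconds):
        self.t += seconds

fake = FakeClock()
throttle = Throttle(min_interval=5.0, clock=fake.now, sleep=fake.sleep)
for page in ["page-1", "page-2", "page-3"]:
    throttle.wait()
    # A real script would request the page here.
    print(f"{fake.now():.1f}s: fetch {page}")
```

Measuring the gap from the previous request, rather than sleeping a fixed amount every time, means slow pages do not add extra waiting on top of the interval.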

Keep Control of Your Data

Another important consideration is where your scraped data is stored. Some tools upload and store extracted data in the cloud. Others, like ProfileSpider, are designed around a more local workflow.

That matters for several reasons:

- More Control: You decide where the exported data goes after extraction.

- Better Privacy: Keeping files local can reduce unnecessary exposure to third parties.

- Simpler Handling: CSV exports that go straight to your machine are easy to review, clean, and import into your own systems.

This local-first approach is especially helpful for teams working with lead lists, candidate data, and other business datasets that require careful handling.